🧠 “Can we trust the machine that decides who gets a loan? The algorithm that screens job candidates? Or the AI that predicts who might commit a crime?”

Artificial intelligence is no longer confined to research labs or sci-fi movies. It’s in our phones, cars, classrooms, and hospitals — quietly influencing decisions that shape our lives.

But as AI grows more powerful and pervasive, one question rises louder than ever:

Can we really trust it?

🌍 The Age of Intelligent Machines

AI now helps doctors detect cancer, powers language models like ChatGPT, drives autonomous vehicles, and even composes symphonies. It’s impressive — and at times, almost unsettling.

The reason? AI doesn’t just automate tasks anymore. It makes decisions.

And when those decisions affect human lives, trust becomes the foundation of everything.

As Sundar Pichai, CEO of Google, famously said:

“AI is one of the most important things humanity is working on. It’s more profound than fire or electricity.”

If that’s true — then building trustworthy AI isn’t just a technical challenge. It’s a moral one.

🤔 What Does It Mean to “Trust” AI?

When we talk about “trust” in human terms, we think of reliability, honesty, and predictability. With AI, it’s a bit more complicated.

To trust AI, we must believe it is:

- Transparent – We understand how it makes decisions.

- Fair – It treats everyone equally and without bias.

- Safe – It performs reliably under all conditions.

- Accountable – Someone is responsible for its actions.

In essence, trustworthy AI should behave ethically, consistently, and explainably.

But here’s the twist: even well-intentioned AI systems can go wrong — often in ways their creators never anticipated.

⚠️ When Trust Was Broken

Let’s look at a few real-world examples that shook public confidence in AI.

🧍♀️ The Biased Hiring Algorithm

In 2018, Amazon scrapped an internal AI recruiting tool after discovering it discriminated against women. The system, trained on past hiring data, “learned” that most successful candidates were male — and penalized resumes that even mentioned “women’s” activities.

The algorithm wasn’t evil. It was biased by history.

🚓 Predictive Policing and Justice

In the U.S., an AI system called COMPAS was used to predict the likelihood of criminal reoffending. Later investigations revealed it often rated Black defendants as higher risk than white defendants with similar records.

The outcome? AI amplified existing human biases — but behind a veil of “objectivity.”

🚗 The Self-Driving Dilemma

Tesla’s Autopilot system, despite its remarkable capabilities, has faced multiple fatal crashes. Each case reignites the debate: can we trust a machine with human lives when it makes split-second decisions?

These stories underline a critical truth: AI reflects us — our data, our choices, our blind spots.

🧩 The Quest for Responsible Machines

So, how do we build AI that deserves our trust?

Experts often refer to three pillars of Responsible AI — the framework guiding companies, researchers, and governments worldwide.

1. Explainability: The “Why” Behind Decisions

AI must be interpretable.

If an AI denies someone a loan or flags them as a security risk, there should be a clear, human-understandable explanation.

This is often achieved through techniques like Explainable AI (XAI), which translates complex neural network outputs into traceable reasoning.

2. Robustness: Reliability in the Real World

An AI that performs flawlessly in a lab might fail spectacularly in real-world conditions. Robust AI is stress-tested across diverse data, edge cases, and environments — ensuring it’s resilient, not just accurate.

3. Alignment: Teaching AI Our Values

AI alignment ensures that machines pursue goals aligned with human values — not just mathematical objectives.

For example, an AI optimizing for “engagement” on social media might unintentionally promote outrage or misinformation. Alignment means programming it to value truth, fairness, and wellbeing instead.

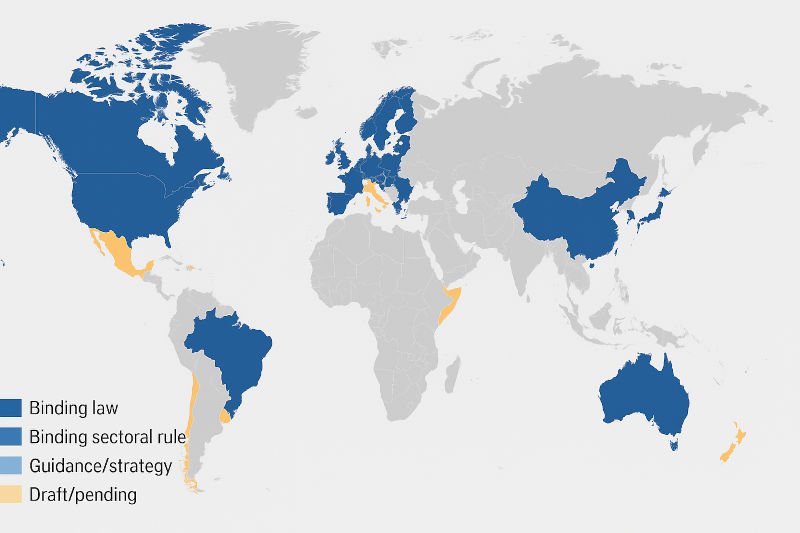

🌐 Global Efforts to Regulate and Reinforce Trust

Trustworthy AI isn’t just a tech industry buzzword — it’s now a matter of international policy.

Here’s how the world is stepping up.

🇪🇺 The EU AI Act: Setting the Global Standard

Passed in 2024, the EU AI Act is the first comprehensive legal framework for artificial intelligence. It categorizes AI systems based on risk level — from minimal to unacceptable.

- High-risk AI (used in hiring, law enforcement, healthcare) faces strict obligations: transparency, data quality, and human oversight.

- Unacceptable AI, such as real-time facial recognition in public or social scoring systems, is outright banned.

- Companies violating the Act could face fines of up to €35 million or 7% of global turnover.

Why it matters:

The EU is building a global template for “AI built with responsibility by design.” Other nations — like Canada, Brazil, and Japan — are already considering similar frameworks.

🧪 OpenAI’s Red Teaming and Alignment Research

Before releasing models like GPT-4 or Sora, OpenAI conducts “red teaming” — a practice borrowed from cybersecurity.

Here’s how it works:

- Internal and external experts try to break the model, pushing it to reveal weaknesses, unsafe responses, or bias.

- The findings are used to reinforce guardrails and safety systems.

- Combined with Reinforcement Learning from Human Feedback (RLHF), this process helps align AI behavior with human intentions.

Additionally, OpenAI has created the Alignment Research Center, focusing on the long-term risks of advanced AI — especially around autonomy, deception, and safety.

Why it matters:

This approach treats AI safety testing as seriously as aviation safety — identifying risks before takeoff.

⚙️ IEEE’s Ethical Standards for AI

The Institute of Electrical and Electronics Engineers (IEEE) — one of the world’s largest engineering bodies — has created a series of global standards for AI ethics, known as the IEEE 7000 Series.

Some key standards include:

- IEEE 7001 – Transparency in autonomous systems

- IEEE 7003 – Algorithmic bias considerations

- IEEE 7010 – Well-being impact assessment

- IEEE 7012 – Machine Readable Personal Privacy Terms

Why it matters:

These standards give engineers a practical code of ethics — transforming abstract ideals into measurable design requirements.

🔒 The Paradox of Trust: Earning It Back

Here’s the paradox:

We trust AI because it seems objective, yet it learns from data that’s deeply human — and therefore flawed.

AI doesn’t choose its values. We do.

Which means every biased dataset, every shortcut in testing, every decision made for speed over safety — ultimately reflects us.

Building trustworthy AI isn’t about perfect algorithms. It’s about responsible choices made by developers, companies, and policymakers.

🚀 The Road Ahead: From Fear to Confidence

The good news?

We’re already moving toward a more ethical, transparent AI future.

- Tech giants are releasing model cards and transparency reports.

- Startups are focusing on ethical data sourcing.

- Universities are teaching AI ethics alongside coding.

- Policymakers are demanding AI audits before deployment.

But the most powerful step isn’t technological — it’s cultural.

We must foster a mindset where “responsibility” isn’t a box to tick, but the core of innovation itself.

🌟 Why This Matters — For Everyone

AI will continue to evolve — faster, smarter, and more autonomous. Whether that evolution benefits humanity or undermines it depends on how much we trust, and verify, the machines we create.

Trustworthy AI isn’t just about preventing harm. It’s about unlocking AI’s full potential — in ways that are safe, fair, and universally beneficial.

Because only when we trust AI, can we truly collaborate with it.

💡 Final Thoughts

AI is not destiny. It’s a reflection — of our data, our designs, and our decisions.

We stand at a defining moment:

Will we let AI evolve unchecked, or will we shape it to reflect the best of us?

Trust is the bridge between fear and progress.

And building that bridge starts with one principle:

Responsible AI isn’t a luxury — it’s a necessity.

📌 Key Takeaways

- Trustworthy AI relies on transparency, fairness, safety, and accountability.

- Global frameworks like the EU AI Act, OpenAI’s red teaming, and IEEE standards are leading the way.

- Responsibility must be embedded in every stage of AI development — not added afterward.

- The future of AI will depend not just on innovation, but on ethics, oversight, and human intent.

🔗 Suggested Reading

- EU Artificial Intelligence Act – Official Overview (europa.eu)

- OpenAI Alignment Research Center

- IEEE 7000 Series Ethical Standards

Watch our video: