What if your computer could tell when you were stressed, happy, or even a little frustrated — just by looking at you or hearing your voice?

It sounds like science fiction, but it’s happening right now.

Artificial intelligence is learning not just to recognize faces or words, but to infer the feelings behind them.

This emerging field — known as Affective Computing or Emotion AI — is changing how we interact with machines, from how we drive cars to how we learn, work, and even play games.

We’ll explore how AI can detect emotions, the science behind it, real-world use cases, privacy debates, and what the future might look like when technology truly understands human emotion.

🧠 What Is Emotion AI?

At its core, Emotion AI (or Affective Computing) is about teaching machines to recognize and interpret human emotions.

The idea was first introduced by Rosalind Picard at the MIT Media Lab in the 1990s. Her vision was simple yet profound:

“To make computers genuinely intelligent, they must have the ability to understand — and respond to — human emotions.”

Emotion AI works by analyzing facial expressions, vocal tone, body language, and sometimes even physiological signals like heart rate or micro-movements.

Through deep learning models trained on thousands of labeled emotional samples, AI can now estimate states like joy, anger, sadness, surprise, disgust, and fear, often faster than a human observer could.

🎭 How AI Reads Your Face and Voice

So, how does AI “see” your emotions? Let’s break it down.

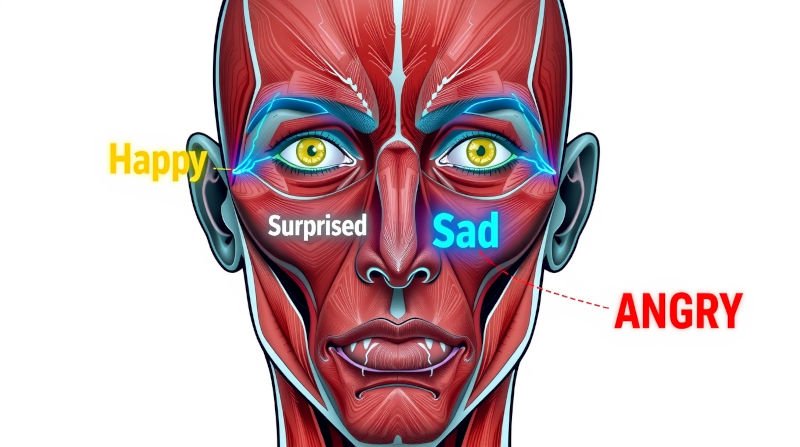

1. Facial Emotion Recognition

AI systems use computer vision to analyze key landmarks on your face — the corners of your mouth, your eyebrows, the curvature of your eyes.

A neural network trained on massive labeled datasets (like FER2013 or AffectNet) maps these facial cues to emotion probabilities.

For example:

- Raised cheeks + lip corners pulled up = Happiness

- Brow furrowed + eyes narrowed = Anger

- Drooped eyelids + downward lips = Sadness

This analysis happens in milliseconds — a single webcam frame can reveal far more than we realize.
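To make “emotion probabilities” a bit more concrete, here’s a minimal sketch of the very last step of such a classifier: turning raw model scores into percentages with a softmax. The scores and the label order below are made up for illustration; they are not taken from any particular model or dataset.

```python
import math

# Label set assumed for illustration; real models define their own labels and order.
EMOTIONS = ["happy", "surprised", "neutral", "sad", "angry", "fearful", "disgusted"]

def softmax(scores):
    """Convert raw model scores (logits) into probabilities that sum to 1."""
    peak = max(scores)  # subtracting the max keeps exp() numerically stable
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def emotion_probabilities(scores):
    """Pair each label with its probability, highest first."""
    probs = softmax(scores)
    return sorted(zip(EMOTIONS, probs), key=lambda pair: pair[1], reverse=True)

# Hypothetical logits for a single webcam frame.
frame_scores = [4.0, 1.8, -0.5, -2.0, -2.5, -3.0, -3.0]
for label, p in emotion_probabilities(frame_scores):
    print(f"{label}: {p:.0%}")
```

The percentages describe how the model splits its confidence across labels, which is not the same thing as how the person actually feels.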

2. Voice Emotion Recognition

Your voice carries emotional cues through tone, pitch, rhythm, and speed.

AI listens to speech patterns and uses audio signal processing combined with natural language understanding to interpret mood.

Think about it — even if you say the same sentence, “I’m fine,” the emotion in your voice might reveal whether you’re actually okay or holding back frustration.
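Two of those cues, pitch and loudness, are easy to measure yourself. The sketch below uses only the Python standard library and a synthetic sine wave as a stand-in for a short slice of speech. The sample rate, the pitch search range, and the “quieter” and “louder” tones are all assumptions for illustration. Real systems work on recorded audio and use many more features.

```python
import math

SAMPLE_RATE = 16_000  # samples per second; an assumed recording rate

def synth_tone(freq_hz, seconds=0.05, amplitude=0.5):
    """Generate a sine wave as a stand-in for a short voiced speech frame."""
    n = int(SAMPLE_RATE * seconds)
    return [amplitude * math.sin(2 * math.pi * freq_hz * i / SAMPLE_RATE)
            for i in range(n)]

def rms_energy(frame):
    """Root-mean-square amplitude, a rough proxy for loudness."""
    return math.sqrt(sum(x * x for x in frame) / len(frame))

def estimate_pitch(frame, min_hz=75, max_hz=400):
    """Estimate pitch by finding the lag with the strongest autocorrelation."""
    min_lag = SAMPLE_RATE // max_hz
    max_lag = SAMPLE_RATE // min_hz
    best_lag, best_score = min_lag, float("-inf")
    for lag in range(min_lag, max_lag + 1):
        score = sum(frame[i] * frame[i + lag] for i in range(len(frame) - lag))
        if score > best_score:
            best_lag, best_score = lag, score
    return SAMPLE_RATE / best_lag

# Two made-up frames: one lower and quieter, one higher and louder.
frames = {
    "lower, quieter": synth_tone(120, amplitude=0.2),
    "higher, louder": synth_tone(260, amplitude=0.8),
}
for name, frame in frames.items():
    print(name, round(estimate_pitch(frame)), "Hz,", round(rms_energy(frame), 2), "RMS")
```

A classifier would take numbers like these, tracked over time, as inputs. On their own they say nothing about mood.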

Companies like Affectiva and Beyond Verbal have built products around facial and vocal emotion analysis, while cloud services like Amazon Rekognition cover the facial side. Multimodal systems go a step further, combining facial, vocal, and contextual cues for a more holistic understanding.

⚙️ Real-World Examples You Can Try

Here’s where it gets exciting — you can actually try this yourself!

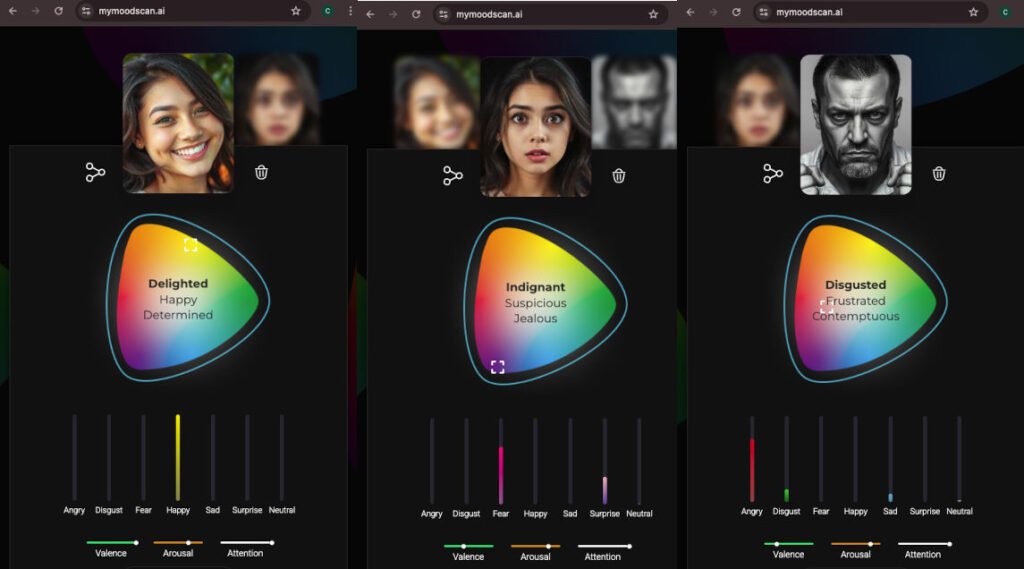

🖼️ 1. MorphCast MyMoodScan

A free, web-based tool that lets you upload a selfie or use your webcam to instantly detect emotions.

It displays results like Happy: 89%, Surprised: 10%, Neutral: 1% — a fun and social-media-friendly demo of what’s possible.

👉 Try it here: MorphCast MyMoodScan

🔒 2. Amazon Rekognition

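Amazon Rekognition is a cloud-based image and video analysis service that makes it easy to add advanced computer vision capabilities to your applications. Its face analysis can return estimated emotion labels, each with a confidence score, for every face it detects.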
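If you’re curious what calling it might look like, here’s a hedged sketch using the boto3 SDK. Rekognition’s DetectFaces operation returns an `Emotions` list for each face when you request all attributes. The region, the image path, and the helper names `top_emotion` and `detect_emotions` are placeholders of mine, and the sample response is trimmed down with made-up values. Actually calling the service needs boto3 installed and AWS credentials configured.

```python
def top_emotion(face_detail):
    """Return (type, confidence) for the highest-confidence emotion of one face."""
    emotions = face_detail.get("Emotions", [])
    if not emotions:
        return None
    best = max(emotions, key=lambda e: e["Confidence"])
    return best["Type"], best["Confidence"]

def detect_emotions(image_path, region="us-east-1"):
    """Send a local image to Rekognition and return the top emotion per face."""
    import boto3  # imported here so the parsing helper works without AWS set up

    client = boto3.client("rekognition", region_name=region)
    with open(image_path, "rb") as f:
        response = client.detect_faces(
            Image={"Bytes": f.read()},
            Attributes=["ALL"],  # emotions are only included when requested
        )
    return [top_emotion(face) for face in response["FaceDetails"]]

# Trimmed-down example of one entry in "FaceDetails", with made-up values.
sample_face = {
    "Emotions": [
        {"Type": "HAPPY", "Confidence": 89.0},
        {"Type": "SURPRISED", "Confidence": 10.0},
        {"Type": "CALM", "Confidence": 1.0},
    ]
}
print(top_emotion(sample_face))  # ('HAPPY', 89.0)
```

The confidence values describe how a face appears in the image, so treat them as a guess about expression rather than a reading of someone’s inner state.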

☁️ 3. Microsoft Face API

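The Microsoft Face API uses state-of-the-art cloud-based face algorithms to detect and recognize human faces in images. Its capabilities include face detection, face verification, and face grouping. Note that in 2022 Microsoft announced it would retire the features that infer emotional states, so this one is better suited to exploring face detection than mood.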

🚗 AI That Feels Your Mood — Everyday Applications

Emotion AI isn’t confined to labs anymore. It’s showing up in surprising places:

1. Driver Monitoring Systems

Modern vehicles use cameras to track driver alertness.

If you’re yawning, blinking too often, or looking away for too long, AI can detect fatigue or distraction and sound an alert — potentially saving lives.
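One widely used heuristic for the “eyes closing” part is the eye aspect ratio (EAR): the eye’s height divided by its width, computed from six landmarks around the eye. The sketch below is my own illustration of that idea, not a description of what any specific vehicle does. The 0.2 threshold and the 15-frame window are assumed values that a real system would tune.

```python
import math

def eye_aspect_ratio(eye):
    """eye: six (x, y) landmarks ordered corner, top, top, corner, bottom, bottom."""
    p1, p2, p3, p4, p5, p6 = eye
    vertical = math.dist(p2, p6) + math.dist(p3, p5)
    horizontal = math.dist(p1, p4)
    return vertical / (2.0 * horizontal)

def first_drowsy_frame(ear_per_frame, threshold=0.2, min_closed_frames=15):
    """Return the frame index where the eyes have stayed closed long enough, else None."""
    closed_run = 0
    for index, ear in enumerate(ear_per_frame):
        closed_run = closed_run + 1 if ear < threshold else 0
        if closed_run >= min_closed_frames:
            return index
    return None

# Made-up landmark coordinates for an open and a nearly closed eye.
open_eye = [(0, 0), (1, 1), (2, 1), (3, 0), (2, -1), (1, -1)]
closed_eye = [(0, 0), (1, 0.1), (2, 0.1), (3, 0), (2, -0.1), (1, -0.1)]
print(round(eye_aspect_ratio(open_eye), 3), round(eye_aspect_ratio(closed_eye), 3))

# A normal blink (3 closed frames) followed later by a long closure (20 frames).
ears = [0.3] * 10 + [0.1] * 3 + [0.3] * 10 + [0.1] * 20
print(first_drowsy_frame(ears))
```

Counting consecutive closed frames is what separates an ordinary blink from eyes that stay shut.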

2. Customer Support

Some call centers now use voice-based emotion detection to sense stress or anger in a customer’s tone.

If frustration rises, the system can immediately route the call to a human agent for empathy and resolution.
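The routing logic itself can be quite simple once you have a frustration score. Here’s a minimal sketch that assumes some upstream model already produces a score between 0 and 1 for each stretch of speech. The class name, the window size, and the threshold are hypothetical choices for illustration.

```python
from collections import deque

class FrustrationMonitor:
    """Track recent frustration scores (0.0 to 1.0) and flag when to escalate."""

    def __init__(self, window=5, threshold=0.7):
        self.scores = deque(maxlen=window)  # keeps only the most recent scores
        self.threshold = threshold

    def update(self, score):
        """Add the newest score; return True once the rolling average is too high."""
        self.scores.append(score)
        window_full = len(self.scores) == self.scores.maxlen
        average = sum(self.scores) / len(self.scores)
        return window_full and average >= self.threshold

# Made-up scores for a call that gets steadily more heated.
monitor = FrustrationMonitor()
call_scores = [0.2, 0.3, 0.5, 0.8, 0.9, 0.9, 0.95]
decisions = [monitor.update(s) for s in call_scores]
print(decisions)
```

Averaging over a window means one sharp word doesn’t trigger a handoff, but a sustained rise does.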

3. Education

AI-powered e-learning platforms are experimenting with detecting confusion or boredom in students’ facial expressions — adapting lesson pace or offering extra help in real time.

4. Gaming & Entertainment

Imagine a horror game that senses your fear through your webcam and reacts dynamically — darker lighting, sudden sounds, or even adaptive difficulty.

Emotion AI could make games truly responsive to human emotion.

5. Healthcare

Psychological and wellness apps are beginning to use emotion recognition to track user well-being — detecting early signs of stress, anxiety, or depression.

AI could soon assist therapists by offering emotional analytics over time, complementing human insight.

🧩 The Science Behind the Smile

Emotion detection doesn’t just rely on pattern recognition — it’s built on decades of psychological research.

Paul Ekman’s studies identified six universal emotions — happiness, sadness, anger, fear, disgust, and surprise — recognizable across cultures.

These became the foundation for early facial expression datasets used in training AI models.

Today, deep neural networks like Convolutional Neural Networks (CNNs) and Transformers analyze these visual cues far more accurately than earlier hand-crafted approaches.

They can even capture micro-expressions lasting less than a fifth of a second — something humans often miss entirely.
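A little arithmetic shows why such brief expressions are easy to miss. The helper below counts how many whole video frames fit inside an expression of a given length. The frame rates and the 40 ms example are assumptions for illustration.

```python
def frames_spanned(duration_ms, fps):
    """Whole frames a camera captures during an event lasting duration_ms."""
    return duration_ms * fps // 1000

for fps in (30, 60):
    print(f"{fps} fps: 200 ms -> {frames_spanned(200, fps)} frames, "
          f"40 ms -> {frames_spanned(40, fps)} frames")
```

At 30 frames per second, a fifth of a second is only 6 frames, and an expression lasting 40 ms fits in a single frame.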

🔐 The Ethics and Privacy Debate

As fascinating as Emotion AI sounds, it opens a Pandora’s box of ethical questions.

- Consent: Should machines analyze our emotions without permission?

- Bias: Training datasets often reflect cultural differences and biases. For instance, a smile in one culture may not mean the same in another.

- Misinterpretation: AI detects expressions, not intent. A neutral face doesn’t necessarily mean sadness, so context matters.

- Surveillance Risks: In workplaces or public spaces, emotion detection could become a tool for monitoring behavior rather than improving experience.

Regulations like the EU AI Act are starting to set rules for Emotion AI, including limits on its use in workplaces and schools, with an emphasis on transparency and fairness.

The challenge ahead is clear: balancing technological possibility with human dignity.

🔮 The Future of Emotionally Intelligent Machines

So, what’s next?

We’re moving toward an era where technology doesn’t just serve us — it understands us.

Imagine:

- Smart homes that play calming music when you’re stressed.

- Virtual tutors that detect confusion and re-explain concepts.

- Healthcare bots that recognize early signs of burnout and suggest breaks.

These aren’t far-off dreams. They’re being tested right now in research labs and startups around the world.

However, the ultimate goal isn’t for AI to replace empathy, but to augment it.

Emotionally aware AI could become an invisible companion that helps humans live more mindful, balanced lives — as long as we build it responsibly.

💡 Try It Yourself — A Fun Experiment!

Want to see how well AI can read your emotions?

Upload a smiling photo to MorphCast MyMoodScan or MoodMe and check the result.

Then, try another photo with a neutral or surprised expression — see how the AI interprets your mood.

You’ll be surprised by how accurate (and sometimes hilariously off) the results can be.

Remember: these tools don’t know you — they just interpret visual patterns.

Still, it’s a powerful demonstration of how far AI has come in understanding human nuance.

⚖️ The Takeaway

Emotion AI blurs the line between technology and empathy.

It shows us that machines can not only see and hear but also begin to feel, in a computational sense.

While the potential is thrilling, the responsibility is equally immense.

How we guide this technology will define whether it becomes a tool for connection or control.

So next time your voice assistant responds cheerfully when you sound tired — just remember, it might actually know.

🏁 Final Thought

AI is learning to understand what makes us human — our emotions, our reactions, our subtleties.

And maybe, as we teach machines to feel, we’ll learn a bit more about our own emotional complexity too.

So here’s the real question:

Would you be comfortable with AI reading your emotions?
