Introduction: A Quiet Revolution in AI

If you’ve ever wondered how AI models are getting better so quickly — without companies spending tens of millions each time they upgrade — you’re about to discover the secret.

It’s not just GPUs.

It’s not just clever data.

It’s a training breakthrough so efficient that it feels almost unfair…

LoRA — Low-Rank Adaptation.

It’s one of the most important innovations in modern AI, yet the average user has no idea it exists.

Today, we’re diving deep into:

- What LoRA really is

- Why it exploded in popularity

- How it lets anyone fine-tune powerful models on their own computer

- Real-world examples

- And the exact steps you can use to try it yourself

Whether you’re a curious enthusiast, a student, or a professional building AI systems, this is a concept that will massively expand your capabilities.

The Problem: Giant Models Are Amazing… and Expensive

Let’s start with a thought experiment.

Imagine you’re working with a big model like Llama, Gemma, or a GPT-style model. These models come with billions of parameters, the numerical knobs the model uses to understand and generate language.

Now imagine you want the model to do something new:

- Write like Shakespeare

- Recognize medical terminology

- Understand legal text

- Answer customer service queries in your organization’s tone

Traditionally, this means you would:

- Update every weight in the model (full fine-tuning)

- On massive GPUs you definitely can’t afford

- For many hours or days

- Consuming massive energy

Total cost?

For the largest models, thousands of dollars or more per run. Training one from scratch can cost millions.

For most creators, startups, and solo developers, this is impossible.

So… how do we upgrade large models without updating every single weight?

Enter LoRA.

LoRA Explained: The “Add-On Modules” That Change Everything

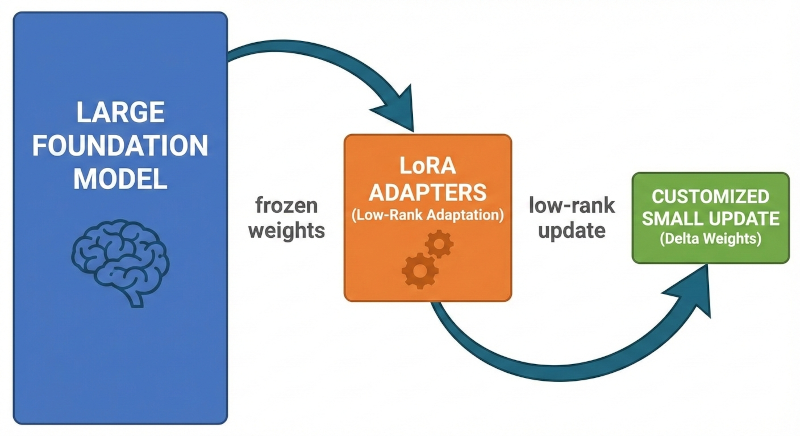

LoRA is built on a surprisingly simple idea:

💡 Instead of retraining the whole model, train only a tiny, compressed set of weights added onto it.

You freeze the original model, leaving its weights untouched, and train pairs of small low-rank matrices (the “low-rank adapters”) that are added alongside the weight matrices of selected layers.

Think of it like:

- You buy a winter jacket.

- But instead of buying a whole new jacket every time the temperature changes…

- You just add or remove layers: hoodie, rain cover, thermal lining.

The jacket stays the same.

The performance changes.

LoRA does exactly that for neural networks.

Why LoRA Is a Game Changer

Here’s what makes LoRA so unbelievably powerful:

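1. Up to 10,000× Fewer Trainable Parameters

In many cases:

- Full model fine-tuning: billions of trainable parameters

- LoRA: a few million trainable parameters

The original LoRA paper reported up to 10,000× fewer trainable parameters and roughly 3× less GPU memory when adapting GPT-3 175B. That’s a reduction so extreme it feels like cheating.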
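To make those two counts concrete, here is a rough back-of-the-envelope sketch in Python for a single weight matrix. The layer size (4096 × 4096) and the rank (8) are assumptions picked for illustration, not figures from any particular model, and the helper names are made up for this example.

```python
def full_params(d_out: int, d_in: int) -> int:
    """Trainable weights when the whole d_out x d_in matrix is updated."""
    return d_out * d_in


def lora_params(d_out: int, d_in: int, rank: int) -> int:
    """Trainable weights for a rank-r adapter: one d_out x r factor and one r x d_in factor."""
    return rank * (d_out + d_in)


# Hypothetical layer size and rank, chosen only for illustration.
d_out = d_in = 4096
rank = 8

full = full_params(d_out, d_in)
lora = lora_params(d_out, d_in, rank)
print(f"full fine-tuning: {full:,} trainable weights")
print(f"LoRA (r={rank}):     {lora:,} trainable weights")
print(f"reduction:        {full // lora}x for this one matrix")
```

For this one matrix the saving is 256×. Whole-model ratios tend to be larger, because adapters are usually attached to only some of the weight matrices while everything else stays frozen.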

Suddenly, fine-tuning that once required a cluster of A100 GPUs can, for small and mid-sized models, be done on:

- Consumer GPUs

- Apple Silicon (M1/M2/M3)

- CPUs, slowly, for very small models

- Cloud machines costing under $1/hour

It democratizes fine-tuning.

2. Multiple Personality Packs for One Model

You can train LoRA modules separately:

- One LoRA for Shakespeare

- One LoRA for coding

- One LoRA for legal writing

- One LoRA for call-center tone

- One LoRA for cooking recipes

Then you dynamically “plug in” whichever LoRA you need.

It’s like keeping several personalities for one model, each stored as a lightweight file.
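The plug-in idea can be sketched with a toy layer in plain Python. Everything here (the `AdaptableLayer` class, the adapter names, the tiny 2 × 2 weights) is hypothetical and only meant to show the mechanics: one frozen weight matrix, several named low-rank pairs, and a switch that picks which pair gets added.

```python
from typing import Dict, List, Optional, Tuple

Vector = List[float]
Matrix = List[List[float]]


def matvec(m: Matrix, v: Vector) -> Vector:
    """Multiply matrix m by vector v using plain Python lists."""
    return [sum(w * x for w, x in zip(row, v)) for row in m]


class AdaptableLayer:
    """One frozen weight matrix plus any number of named low-rank adapters."""

    def __init__(self, weight: Matrix) -> None:
        self.weight = weight  # frozen base weights, never modified below
        self.adapters: Dict[str, Tuple[Matrix, Matrix]] = {}
        self.active: Optional[str] = None

    def add_adapter(self, name: str, a: Matrix, b: Matrix) -> None:
        self.adapters[name] = (a, b)

    def set_adapter(self, name: Optional[str]) -> None:
        """Pick the active adapter; None means plain base model."""
        if name is not None and name not in self.adapters:
            raise KeyError(name)
        self.active = name

    def forward(self, x: Vector) -> Vector:
        out = matvec(self.weight, x)
        if self.active is not None:
            a, b = self.adapters[self.active]
            delta = matvec(a, matvec(b, x))  # low-rank update A (B x)
            out = [o + d for o, d in zip(out, delta)]
        return out


layer = AdaptableLayer([[1.0, 0.0], [0.0, 1.0]])
layer.add_adapter("shakespeare", a=[[1.0], [0.0]], b=[[0.0, 1.0]])
layer.add_adapter("legal", a=[[0.0], [1.0]], b=[[1.0, 0.0]])

x = [1.0, 2.0]
for name in (None, "shakespeare", "legal", None):
    layer.set_adapter(name)
    print(name, layer.forward(x))
```

Switching back to `None` gives the same output as at the start, since the base weights were never touched.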

3. Tiny File Sizes (20MB instead of 4GB)

LoRA adapters are often:

- 5MB

- 20MB

- 50MB

Instead of the several gigabytes (or more) that a full model checkpoint takes up.

You can store hundreds of LoRA variants without filling your disk.
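A quick estimate shows why the files stay small. The sketch below assumes square projection matrices, 16-bit weights, and a hypothetical 32-layer model with a hidden size of 4096; none of these numbers come from a specific model, and `adapter_size_mb` is a made-up helper.

```python
def adapter_size_mb(num_layers: int, matrices_per_layer: int, d_model: int,
                    rank: int, bytes_per_weight: int = 2) -> float:
    """Estimate adapter size, assuming every adapted matrix is d_model x d_model."""
    weights_per_matrix = rank * (d_model + d_model)  # two thin factors per matrix
    total_weights = num_layers * matrices_per_layer * weights_per_matrix
    return total_weights * bytes_per_weight / 1_000_000


# Hypothetical 32-layer model, hidden size 4096, stored in 16-bit precision.
small = adapter_size_mb(num_layers=32, matrices_per_layer=2, d_model=4096, rank=8)
large = adapter_size_mb(num_layers=32, matrices_per_layer=4, d_model=4096, rank=16)
print(f"rank 8,  2 matrices per layer: {small:.1f} MB")
print(f"rank 16, 4 matrices per layer: {large:.1f} MB")
```

Under these assumptions the two settings come out at roughly 8.4 MB and 33.6 MB, which is the same ballpark as the sizes listed above.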

4. Avoids Catastrophic Forgetting

If you fine-tune the whole model and train “AI Shakespeare,” it might forget how to do coding or general reasoning.

LoRA avoids this by:

- Keeping the base model unchanged

- Storing specific adaptations separately

Because the base weights never change, you can detach the adapter at any time and get the original model’s general abilities back.

5. Hugging Face, Kaggle, Colab, Apple Silicon — Everywhere

LoRA’s simplicity made it an industry standard.

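Most major libraries and tools support it:

- Hugging Face PEFT (together with 🤗 Transformers)

- Hugging Face TRL

- Axolotl

- Oobabooga (text-generation-webui)

- llama.cpp and GGUF-based workflows (for applying adapters at inference time)

And it runs on most hardware backends:

- Apple Silicon (via MPS)

- Intel

- ROCm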

LoRA is universal.

Where Did LoRA Come From? A Quick History

LoRA was introduced in 2021 by Microsoft researchers.

Before LoRA:

- Fine-tuning usually meant backprop through, and updates to, every weight

- Data-center GPUs like the A100 were required for large models

- Data centers consumed massive power

After LoRA:

- A single consumer GPU or laptop could teach a small or mid-sized model new tricks

- AI became modular

- Open-source communities exploded in innovation

Then came:

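- QLoRA (LoRA on top of a 4-bit quantized base model)

- DoRA (splits each weight into a magnitude and a direction)

- ReLoRA (stacks repeated low-rank updates)

- AdaLoRA (adapts the rank budget across layers)

Each improves efficiency, memory usage, or accuracy.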

But LoRA remains the foundation — the technique that made personalization of large models accessible to everyone.

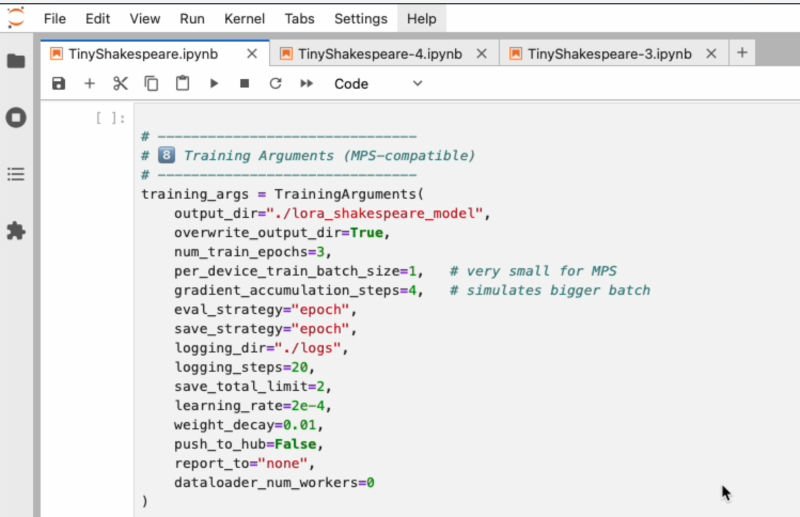

Real Example: Fine-Tuning Gemma-3 or GPT-OSS-20B Using LoRA

To demonstrate LoRA in action, let’s pick a model:

👉 Gemma 3 1B (Hugging Face checkpoint; GGUF files are mainly meant for inference)

OR

👉 GPT-OSS-20B (HF version)

And train it on a small dataset like:

Tiny Shakespeare

- Available on Hugging Face

- Lightweight (under 3MB)

- Perfect for demonstrating style transfer

- Produces fun, dramatic outputs

The charming part?

Even with a tiny dataset like this, LoRA can strongly shift the model’s writing style.

With a 1B model, you can run a complete training session on a machine like this (the 20B model needs considerably more memory):

- MacBook Pro M2/M3

- MPS backend

- 8–16GB RAM

This would be unthinkable for full fine-tuning.

Practical Example Output

After training a LoRA on Tiny Shakespeare, your model might generate:

“Thou speak’st of circuits, wires, and minds unseen,

Yet in thy gaze the spark of wonder lies.”

Suddenly a modern AI model starts sounding like a bard.

All from ~20MB of LoRA weights.

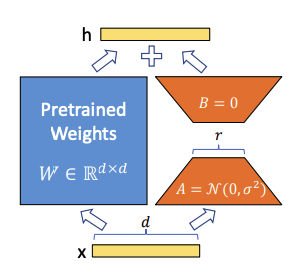

How LoRA Works Internally (Simple Explanation)

Neural networks have giant weight matrices inside attention and feed-forward layers.

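Instead of updating such a matrix W directly, LoRA learns a low-rank update ΔW and adds it:

W + ΔW, where ΔW = A × B

- A = small matrix of shape d × r

- B = small matrix of shape r × k

- Rank (r) is extremely low (like 4 or 8), far smaller than d or k

For that one matrix, the trainable weights drop from d × k to r × (d + k), a reduction of hundreds of times at typical sizes. Because adapters are attached to only some matrices, the reduction across the whole model can be larger still.

Even more importantly:

Only A and B (the LoRA parameters) are trained.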

W stays frozen.

This prevents overwriting the base model.
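Here is a minimal sketch of that forward pass in plain Python, using the A × B naming from this article. Two details are assumptions that are not stated above but follow common LoRA practice: the update is scaled by `alpha / rank`, and one of the two factors starts at zero so the adapter initially changes nothing. The sizes and the helper names are purely illustrative.

```python
import random
from typing import List

Vector = List[float]
Matrix = List[List[float]]


def matvec(m: Matrix, v: Vector) -> Vector:
    """Multiply matrix m by vector v using plain Python lists."""
    return [sum(w * x for w, x in zip(row, v)) for row in m]


def lora_forward(w: Matrix, a: Matrix, b: Matrix, x: Vector,
                 alpha: float, rank: int) -> Vector:
    """Compute (W + (alpha / rank) * A B) x without building the full A B matrix."""
    base = matvec(w, x)               # frozen path: W x
    update = matvec(a, matvec(b, x))  # adapter path: A (B x)
    scale = alpha / rank
    return [h + scale * u for h, u in zip(base, update)]


rng = random.Random(0)
d_out, d_in, rank, alpha = 4, 4, 2, 4.0

w = [[rng.gauss(0.0, 1.0) for _ in range(d_in)] for _ in range(d_out)]  # frozen W
a = [[0.0] * rank for _ in range(d_out)]                                # A starts at zero
b = [[rng.gauss(0.0, 0.01) for _ in range(d_in)] for _ in range(rank)]  # B starts small
x = [1.0, 2.0, 3.0, 4.0]

# Before any training, A B is all zeros, so the adapted layer matches the base layer.
print(lora_forward(w, a, b, x, alpha, rank) == matvec(w, x))
```

During training, gradients would flow only into `a` and `b`; `w` is read but never written.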

LoRA in Real-World Use Cases

LoRA isn’t just a hobbyist tool. It’s used widely in industry:

Customer Service

Companies fine-tune LLMs to speak in their brand voice.

Education

LLMs fine-tuned to teach like a specific instructor.

Healthcare

Models adapted to understand medical terminology without retraining the base model.

Code Assistants

LoRA allows specialization — Python, Rust, DevOps each get their own LoRA.

Creative Writing

- Storytelling tone packs

- Anime LoRA

- Historical LoRA

- Sci-fi LoRA

Enterprises

- Internal corporate knowledge LoRAs

- Security LoRAs

- Privacy-compliant LoRAs

This technique is a core reason LLMs are so flexible.

Trying It Yourself: Quick Start

If you want a practical way to run LoRA fine-tuning at home, here is the easiest workflow:

1. Choose Your Model

For Apple Silicon:

- Gemma 3 1B

- Llama 3.2 1B

- GPT-OSS-20B (only on machines with plenty of unified memory)

2. Choose Your Dataset

Example:

- Tiny Shakespeare

- Alpaca dataset

- Custom JSON conversations

3. Use Hugging Face PEFT (Python)

- Load model

- Attach LoRA layers

- Train adapter

- Save LoRA file
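The four steps above might look roughly like this with Hugging Face PEFT and Transformers. Treat it as a sketch under assumptions: the hyperparameters are illustrative, the `q_proj` and `v_proj` target names match the attention projections in Llama-style and Gemma-style models but should be checked against your model, and the training loop itself is left out. `attach_lora` and `save_adapter` are hypothetical helper names, and the libraries are imported lazily so the settings can be inspected without them installed.

```python
# Illustrative LoRA hyperparameters (assumptions, not values from this article).
LORA_SETTINGS = {
    "r": 8,                                  # rank of the low-rank update
    "lora_alpha": 16,                        # scaling factor applied to the update
    "lora_dropout": 0.05,
    "target_modules": ["q_proj", "v_proj"],  # which layers receive adapters
    "task_type": "CAUSAL_LM",
}


def attach_lora(model_id: str):
    """Load a base model from the Hub and wrap it with trainable LoRA layers."""
    from peft import LoraConfig, get_peft_model
    from transformers import AutoModelForCausalLM

    base = AutoModelForCausalLM.from_pretrained(model_id)
    model = get_peft_model(base, LoraConfig(**LORA_SETTINGS))
    model.print_trainable_parameters()  # reports trainable vs. total parameter counts
    return model


def save_adapter(model, out_dir: str) -> None:
    """Write only the adapter weights and config, not the base model."""
    model.save_pretrained(out_dir)


# Usage (downloads the model, so it is not run here):
#   model = attach_lora("<hub id of the model you chose in step 1>")
#   ... train with your usual loop or trainer ...
#   save_adapter(model, "shakespeare-lora")
```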

4. Apply LoRA at Runtime

- Load base model

- Inject LoRA

- Generate text

In seconds, your model transforms.
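As a hedged sketch of the runtime side with PEFT: the function below loads a base model, layers a saved adapter on top, and generates text. The function name, the adapter directory, and the generation length are assumptions for illustration; the base model ID must match the one the adapter was trained on.

```python
def generate_with_adapter(model_id: str, adapter_dir: str, prompt: str,
                          max_new_tokens: int = 60) -> str:
    """Load a base model, apply a saved LoRA adapter, and generate a continuation."""
    # Imported lazily because these libraries are heavy and optional.
    from peft import PeftModel
    from transformers import AutoModelForCausalLM, AutoTokenizer

    tokenizer = AutoTokenizer.from_pretrained(model_id)
    base = AutoModelForCausalLM.from_pretrained(model_id)
    model = PeftModel.from_pretrained(base, adapter_dir)  # inject the LoRA weights

    inputs = tokenizer(prompt, return_tensors="pt")
    output_ids = model.generate(**inputs, max_new_tokens=max_new_tokens)
    return tokenizer.decode(output_ids[0], skip_special_tokens=True)


# Usage (downloads the model, so it is not run here):
#   text = generate_with_adapter("<base model id>", "shakespeare-lora",
#                                "Tell me about computers.")
```

Loading the base model is the slow part; applying the adapter on top of an already loaded model is comparatively quick.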

Why LoRA Will Shape the Future of Personalized AI

The coming age of AI is not about one giant model for everyone.

It’s about:

- Personal AI

- Corporate AI

- Task-specific AI

- Local AI models

- Small AI with big skills

LoRA is the backbone enabling this shift.

In the near future, you won’t download entire new models — you’ll download:

- LoRA writing skills

- LoRA reasoning modules

- LoRA coding boosts

- LoRA personas

- LoRA domain expertise

All applied on top of a stable base model running on your device.

Conclusion: LoRA Is the Secret Layer Powering the AI Revolution

LoRA transformed the AI world quietly and elegantly.

It:

- Makes training accessible

- Saves massive compute

- Keeps base models stable

- Unlocks personalization

- Democratizes advanced AI

If you’re building AI tools, content, or workflows — LoRA isn’t just useful.

It’s essential.

This tiny, clever trick is enabling a future where AI is:

- More personal

- More adaptive

- Cheaper to train

- Easier to modify

- And available to everyone

And we’re only at the beginning.
