Introduction: When Smart AI Gets It Wrong

You’ve probably seen it happen.

You ask an AI a simple question — maybe about the latest medical discovery, a complex law, or even a new technology trend. It responds instantly and confidently.

But then, you double-check.

And realize… it was completely wrong.

That’s the frustrating reality of modern AI. Large language models (LLMs) like ChatGPT, Gemini, or Claude are trained on massive datasets, yet they sometimes hallucinate — confidently generating false or outdated information.

Now imagine an AI that is far less likely to make things up, because it doesn’t rely on memory alone. Instead, it retrieves real information from trusted sources before giving you an answer.

That’s the magic of Retrieval-Augmented Generation, or RAG — and it’s quietly revolutionizing how AI thinks, learns, and explains.

What Is Retrieval-Augmented Generation (RAG)?

At its core, RAG is a simple but powerful idea.

It merges two worlds of AI:

- Retrieval — the ability to search and find relevant, factual data.

- Generation — the ability to write and explain using natural language.

When these two work together, the AI becomes both knowledgeable and articulate.

Let’s break it down.

Traditional AI models generate answers from the patterns they learned during training. If a fact wasn’t part of that training data, the AI might just guess.

RAG, on the other hand, first performs a retrieval step — pulling in the most relevant documents or facts from external databases, APIs, or your private knowledge base. Then, it uses those facts during the generation step, crafting a fluent, accurate answer.
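As a rough sketch of that two-step flow, the Python below retrieves by simple word overlap and then assembles a prompt for the model. The sample documents, the `retrieve` and `build_prompt` helpers, and the prompt wording are all illustrative assumptions; a real system would typically use an embedding-based retriever and send the prompt to an actual LLM.

```python
import re

# Hypothetical knowledge base; in practice this would be your own documents.
DOCUMENTS = [
    "Electric aircraft produce no exhaust emissions during flight.",
    "Battery energy density currently limits electric aircraft range.",
    "Sourdough bread needs a long fermentation time.",
]

def tokenize(text):
    """Lowercase the text and return its set of words."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))

def retrieve(question, documents, k=2):
    """Retrieval step: rank documents by how many words they share with the question."""
    q_words = tokenize(question)
    ranked = sorted(documents, key=lambda d: len(q_words & tokenize(d)), reverse=True)
    return ranked[:k]

def build_prompt(question, facts):
    """Input to the generation step: put the retrieved facts in front of the question."""
    context = "\n".join(f"- {fact}" for fact in facts)
    return (
        "Answer using only the facts below.\n"
        f"Facts:\n{context}\n"
        f"Question: {question}"
    )

question = "What emissions do electric aircraft produce?"
facts = retrieve(question, DOCUMENTS)
prompt = build_prompt(question, facts)  # this string would be sent to the LLM
print(prompt)
```

The unrelated sourdough sentence never reaches the prompt, which is the point: the model only sees material that matched the question.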

In short: RAG turns an AI’s imagination into intelligence.

A Simple Analogy: The Student and the Library

Think of an LLM as a talented student.

It can write beautifully, summarize complex topics, and sound incredibly confident.

But… it doesn’t always remember its facts correctly.

Now, give that same student access to a library. Before writing an essay, they can look up reliable references, verify their claims, and cite sources.

Suddenly, the essays are accurate and insightful.

That’s what RAG does for AI — it gives models a “library card.” Instead of relying only on what they “remember,” they can look up real data before answering.

Why RAG Is a Game-Changer

RAG isn’t just a technical improvement — it’s a trust revolution for AI.

Here’s why it matters so much:

1. Accuracy: Fewer Hallucinations

Traditional models sometimes generate false statements that sound right. RAG reduces that risk by pulling from verifiable sources before generating a response.

This means an AI built on RAG is grounded in evidence by design, though its answers are only as good as the sources it retrieves.

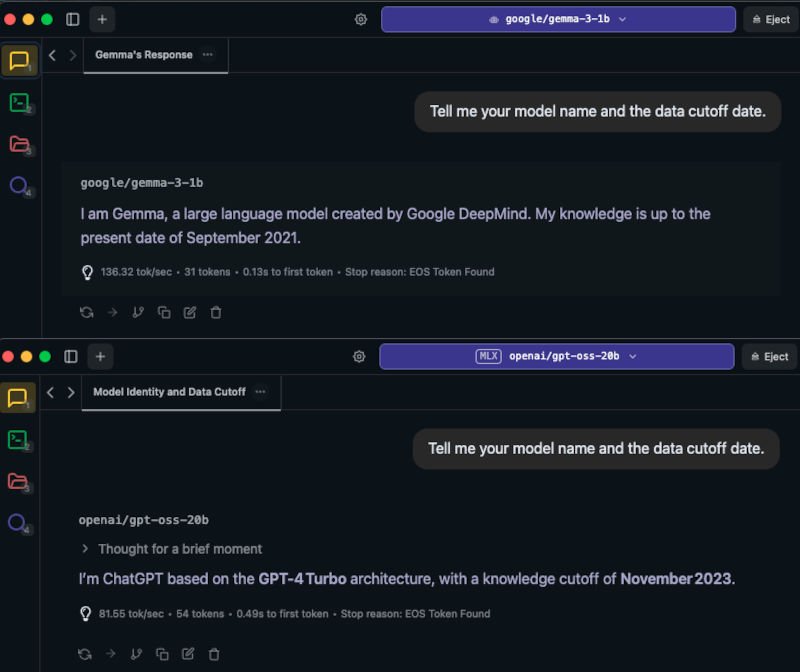

2. Freshness: Real-Time Knowledge

Most language models are trained once, then frozen in time. Their “knowledge cutoff” might be 2023, 2024… and that’s it.

RAG breaks that limitation. It connects to live data — APIs, databases, or even the web — meaning it can always access the latest information.

So if you ask a RAG-powered AI about a 2025 event or a new research paper, it can find it now, not guess from memory.

3. Customization: Your AI, Your Data

Imagine ChatGPT answering from your company’s knowledge base, customer FAQs, and internal policies, without retraining the model. That’s what RAG enables.

You can connect an AI to your own data sources — PDFs, Google Docs, Notion pages, or product manuals — and get answers that reflect your specific context.

That’s why enterprise AI assistants, research copilots, and content automation tools are all adopting RAG.

4. Transparency: Answers You Can Trust

RAG systems can include source citations, so users can verify where the information came from.

In other words: you don’t just get an answer — you get proof.
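One way citations can work, sketched in Python: number each retrieved chunk, ask the model to cite those numbers, and keep a matching source list to show under the answer. The chunk texts, file names, and helper functions here are made up for illustration and do not come from any particular framework.

```python
# Hypothetical retrieved chunks; the file names are invented for this example.
chunks = [
    {"text": "Refunds are available within 30 days of purchase.", "source": "refund-policy.pdf"},
    {"text": "Refunds are issued to the original payment method.", "source": "billing-faq.md"},
]

def build_cited_context(chunks):
    """Number each chunk so the model can refer to it as [1], [2], ..."""
    return "\n".join(f"[{i}] {c['text']}" for i, c in enumerate(chunks, start=1))

def build_source_list(chunks):
    """Map each citation number back to where the chunk came from."""
    return "\n".join(f"[{i}] {c['source']}" for i, c in enumerate(chunks, start=1))

context = build_cited_context(chunks)
prompt = (
    "Answer the question using the numbered passages, and cite them like [1].\n"
    + context
    + "\nQuestion: What is the refund window?"
)
sources = build_source_list(chunks)  # displayed to the user under the answer
print(prompt)
print(sources)
```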

Under the Hood: How RAG Actually Works

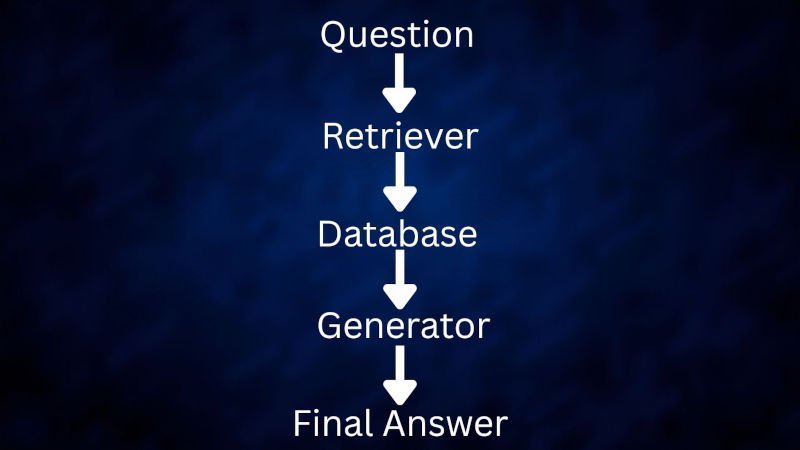

Let’s simplify the technical flow of RAG without diving too deep into code.

- You Ask a Question

→ “What are the environmental benefits of electric aviation?”

- Retriever Gets to Work

→ The system searches a vector store such as Pinecone, FAISS, or Weaviate. Alongside the text itself, these stores hold embeddings: numerical representations of text that capture meaning.

- Relevant Information Is Retrieved

→ The retriever finds the most similar pieces of text (called “chunks”) in the store.

- Generator Creates the Final Answer

→ The LLM uses these chunks as context and writes a cohesive, natural answer based on them.

The result? An answer that’s fluent, up-to-date, and factually grounded.
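For readers who do want a peek at code, here is a minimal sketch of that flow in plain Python. It makes several simplifying assumptions: `embed` is a toy word-count stand-in for a real embedding model, `TinyVectorStore` is a hypothetical in-memory substitute for a vector database, and the final LLM call is left out.

```python
import math
import re
from collections import Counter

def embed(text):
    """Toy embedding: word counts. A real system would call an embedding model."""
    return Counter(re.findall(r"[a-z]+", text.lower()))

def cosine(a, b):
    """Cosine similarity between two sparse count vectors."""
    dot = sum(a[w] * b[w] for w in a if w in b)
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0

class TinyVectorStore:
    """In-memory stand-in for a vector database: keeps (embedding, chunk) pairs."""

    def __init__(self):
        self.items = []

    def add(self, chunk):
        self.items.append((embed(chunk), chunk))

    def search(self, query, k=2):
        q = embed(query)
        scored = sorted(self.items, key=lambda item: cosine(q, item[0]), reverse=True)
        return [chunk for _, chunk in scored[:k]]

store = TinyVectorStore()
for chunk in [
    "Electric aviation cuts in-flight carbon emissions.",
    "Electric aircraft are quieter than jet aircraft.",
    "The museum opens at nine on weekdays.",
]:
    store.add(chunk)

question = "What are the environmental benefits of electric aviation?"
top_chunks = store.search(question, k=2)  # retriever: embed the question, find similar chunks
prompt = "Context:\n" + "\n".join(top_chunks) + f"\n\nQuestion: {question}"
# The generator step would pass `prompt` to an LLM; that call is omitted here.
print(prompt)
```

Word counts only match exact words, so this toy retriever misses synonyms. Learned embeddings are what let production systems match on meaning instead.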

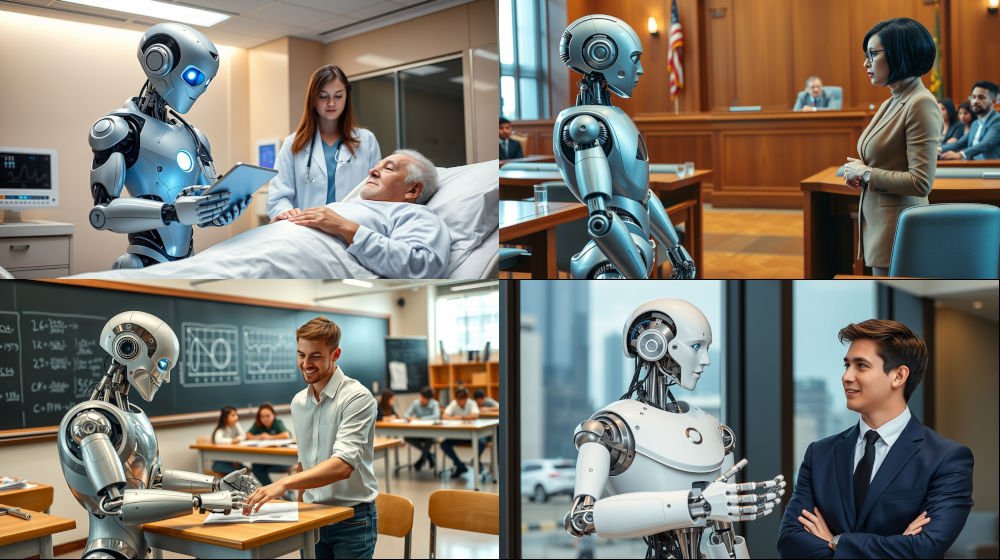

Real-World Applications of RAG

RAG isn’t theoretical—it’s already shaping industries across the globe.

Healthcare

RAG-powered systems can summarize clinical studies, provide real-time medical insights, and support doctors with evidence-based recommendations.

Legal

Law firms use RAG to search and summarize case law, contracts, and rulings, with answers tied back to the underlying documents.

Education

Students and teachers use RAG-driven assistants to find relevant academic papers and summarize key insights.

Enterprise

Customer support bots can pull directly from company manuals or databases, providing accurate answers instantly.

Research & Journalism

RAG is helping researchers synthesize knowledge across thousands of papers — in minutes, not weeks.

How to Master RAG — Step by Step

Now that you understand why RAG matters, let’s talk about how you can master it.

Whether you’re a student, developer, or professional, this roadmap will help you get hands-on with RAG.

Step 1: Understand the Basics

Start with the fundamentals:

- Learn about embeddings — how text is turned into numbers that represent meaning.

- Understand vector stores: databases like Pinecone or Weaviate, and similarity-search libraries like FAISS.

- Explore how similarity search helps retrieve relevant data.
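To make similarity search concrete, here is a small Python sketch using cosine similarity, one common way to compare embeddings. The three-dimensional vectors are invented for illustration; real embeddings come from a model and have hundreds or thousands of dimensions.

```python
import math

def cosine_similarity(u, v):
    """Cosine of the angle between two vectors: values near 1.0 mean similar direction."""
    dot = sum(x * y for x, y in zip(u, v))
    return dot / (math.hypot(*u) * math.hypot(*v))

# Made-up 3-dimensional "embeddings" for three words.
vectors = {
    "cat": [0.9, 0.1, 0.0],
    "kitten": [0.8, 0.2, 0.1],
    "invoice": [0.0, 0.1, 0.9],
}

query = vectors["cat"]
ranked = sorted(
    (name for name in vectors if name != "cat"),
    key=lambda name: cosine_similarity(query, vectors[name]),
    reverse=True,
)
print(ranked)  # ['kitten', 'invoice']
```

"kitten" points in nearly the same direction as "cat", so it ranks first, while "invoice" points elsewhere. A vector store does the same comparison, only across millions of vectors and with indexes that avoid checking every one.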

Step 2: Get Practical

Tools like LangChain, LlamaIndex, or Haystack make building RAG systems easier than ever.

Start small:

Upload PDFs or documents, and let your AI answer questions about them.

It’s surprisingly intuitive — and incredibly powerful.

Step 3: Optimize the Pipeline

Once you get comfortable, experiment with:

- Chunking: breaking documents into small, meaningful sections for better retrieval.

- Retriever types: dense vs. sparse search.

- Rerankers: improve accuracy by ranking the most relevant results higher.

This is where RAG goes from good to great.
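Chunking is the easiest of these to try by hand. The sketch below splits text into fixed-size word windows that overlap, so a sentence cut at a boundary still appears whole in a neighbouring chunk. The `chunk_words` function and its default sizes are assumptions for illustration; real pipelines often split on sentences, headings, or tokens instead.

```python
def chunk_words(text, chunk_size=50, overlap=10):
    """Split text into chunks of `chunk_size` words, repeating `overlap` words between neighbours."""
    if overlap >= chunk_size:
        raise ValueError("overlap must be smaller than chunk_size")
    words = text.split()
    step = chunk_size - overlap
    chunks = []
    for start in range(0, len(words), step):
        chunks.append(" ".join(words[start:start + chunk_size]))
        if start + chunk_size >= len(words):
            break  # this chunk already reached the end of the text
    return chunks

# Twelve placeholder words, w0 through w11, to make the overlap easy to see.
sample = " ".join(f"w{i}" for i in range(12))
chunks = chunk_words(sample, chunk_size=5, overlap=2)
for c in chunks:
    print(c)
```

Each chunk starts with the last two words of the previous one. Larger chunks carry more context per hit, smaller ones make retrieval more precise, so the right size is worth testing on your own documents.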

Step 4: Build Real Projects

Apply what you learn by building something useful:

- A personal knowledge assistant that searches your notes.

- A research summarizer for academic papers.

- A company chatbot that knows your internal docs.

These projects help you understand not just how RAG works—but why it matters.

Step 5: Stay Ahead of the Curve

AI evolves fast.

Follow research papers on RAG improvements, hybrid retrieval systems, and emerging frameworks.

Join online AI communities experimenting with retrieval workflows — you’ll always learn faster with others.

Remember: mastering RAG isn’t about memorizing concepts. It’s about building trustworthy systems that combine human creativity with factual grounding.

The Future of AI Is Grounded in Reality

As AI continues to grow more powerful, one truth becomes clear: intelligence without grounding is dangerous.

RAG represents the next step — AIs that not only sound intelligent but are intelligent, because they rely on evidence, not guesses.

Soon, most serious AI products, from customer support bots to autonomous research assistants, will likely have some form of RAG built in.

It’s the difference between believable AI and trustworthy AI.

And for creators, developers, and tech professionals — mastering RAG now means mastering the foundation of the next generation of AI systems.

Final Thoughts

AI used to be about mimicking human speech.

Now, with RAG, it’s about mimicking human reasoning.

By combining retrieval and generation, we’ve finally bridged the gap between imagination and truth.

So the next time you ask your AI a complex question and get an answer that feels both smart and accurate — chances are, there’s a little bit of RAG behind it.
